Overdeveloped

I'm Nick DiMucci, founder and head developer of MindShaft Games. This is a blog mostly about game development with some software engineering sprinkled in.

Monday, November 17, 2014

Yet Another Addendum: 2D Platformer Collision Detection in Unity

You can see the bug in the video below. Just fast forward to the 1:07 mark and watch the Angel in the lower right corner.

It actually took me a long time to come up with a fix for this, even though it now seems so simple and obvious. Without reiterating all of the details of the collision system, I essentially cast rays in the direction the player is moving. If the player is moving left, I cast evenly spaced horizontal rays from the box collider (4 in this game's case). To cover the corners of the box collider, I use a margin variable to cast ever so slightly outside of the box collider bounds; refer to my previous posts on this for further detail.

This was causing the snagging issue because while the player's box collider wasn't actually colliding with the tile (and it doesn't appear to be in the game either), a collision was still being detected due to the margin. By itself, this isn't too bad, but we'd also get a y-axis collision detected, and this, along with an x-axis collision, would cause the snagging/jittering effect.

The ideal solution is to reduce the margin of the raycasts so that we're not casting outside of the box collider, while still guarding against corner collisions. Thus, we introduce diagonal raycasts from the corners of the box collider!

Here is the new code in all its glory.

Note the Move method and when we perform the diagonal raycasts. We only want to perform them when the player is moving through the air, which we check by confirming that the player is moving on both the x and y axes and is neither in a side collision nor on the ground. We then perform a simple raycast (always with the origin in the center of the collider) in the direction the player is moving. When a corner is hit, we simply stop the x-axis movement.
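For anyone reading without the embedded code, here is a rough sketch of just the decision described above. It is written in Python rather than Unity C# to keep it engine-agnostic, and it is not the actual code from the game: `MoveState`, `diagonal_ray_direction` and `resolve_corner` are hypothetical names, and the raycast itself is reduced to a `corner_hit` boolean that your engine's physics query would supply.

```python
import math
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass
class MoveState:
    """Hypothetical per-frame movement state (not from the original code)."""
    dx: float              # intended x movement this frame
    dy: float              # intended y movement this frame
    grounded: bool         # standing on the ground
    side_collision: bool   # already touching a wall


def diagonal_ray_direction(state: MoveState) -> Optional[Tuple[float, float]]:
    """Return the normalized direction for the diagonal ray, or None to skip it.

    The ray is only wanted while airborne and moving on both axes.
    """
    moving_diagonally = state.dx != 0 and state.dy != 0
    if not moving_diagonally or state.grounded or state.side_collision:
        return None
    length = math.hypot(state.dx, state.dy)
    return (state.dx / length, state.dy / length)


def resolve_corner(state: MoveState, corner_hit: bool) -> Tuple[float, float]:
    """Stop x-axis movement when the diagonal ray reports a corner hit."""
    if diagonal_ray_direction(state) is not None and corner_hit:
        return (0.0, state.dy)
    return (state.dx, state.dy)
```

In Unity the `corner_hit` value would presumably come from a 2D raycast cast from the collider's center along the returned direction; how far to cast is a tuning decision this sketch leaves open.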

A simple solution to a problem that was haunting me for a while.

Wednesday, October 15, 2014

Duke Nukem 3D - Game Tutorial Through Level Design

Before we dive in, we need to remember that Duke Nukem 3D was released almost 19 years ago (damn, we're all getting old :( ). The first person shooter genre was just being born out of prior games such as Wolfenstein 3D and Doom. Duke Nukem 3D, at the time, was a large leap forward in the genre, offering true 3D play, as players could traverse the Y-axis through jumping and jetpacking, along with incredibly expansive, detailed levels and interactivity. Duke3d needed to let players know this wasn't Doom they were playing!

We're going to walk through just the first area of Hollywood Holocaust, and how the level design is used to teach the player about the new mechanics available to him, both as a player who's played Doom, and a player new to the FPS genre entirely.

The game starts with Duke jumping out of his ride (damn those alien bastards!). Immediately, Duke is airborne. He doesn't start grounded; gravity pulls him down the Y-axis. This immediately tells the player that there's a whole new axis of gameplay available to them. They will not be zipping around just the X and Z axes. This is further emphasized by the fact that the player lands on a caged-in rooftop. There's nowhere to go but down!

The player is left to roam the enclosed rooftop. The rooftop is seemingly bare at first, but rewards the player for exploring beyond the obvious path with some additional ammo hidden behind the large crate. Exploration and hidden areas are a large part of Duke3d's gameplay, and this is a subtle, yet effective way of communicating that to the player.

Next, the player will come across a large vent fan, taped off, with some explosive barrels conveniently placed next to it. The game literally cannot continue until the player figures out the core mechanic of the game, shooting. Not only is the mechanic of shooting being taught, but also the mechanic of aiming at your target. This is all done at a leisurely, comfortable pace for the player. Imagine if there was an enemy guarding the air vent. For a player new to the genre (and back in 1996, it was very common to have someone play this game who had never played an FPS before, not even Doom), it would have been very overwhelming and probably a guaranteed player death.

Once the player figures out aiming and shooting, they are also taught another core mechanic of the game, puzzle solving. Solving little environment-based puzzles will be common going forward, so the player needs to be taught to be aware of their surroundings and to understand that the world is interactive and that interactivity will be key to success.

It's fascinating to me that the core of the game is taught to the player in so little time, with such seemingly simple level design.

Thursday, August 28, 2014

Using Jenkins with Unity

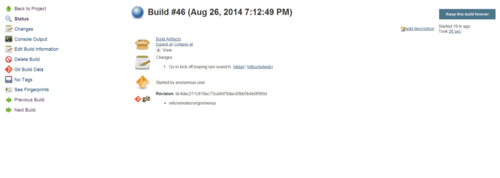

Going off my last post where I used a batch script to automate Unity builds, I decided to take it a step further and integrate Jenkins, the popular CI software, into the process.

With Jenkins, I can poll the Demons with Shotguns git repository and detect when changes are made, at which point it'll perform a Unity build (building the Windows target) and archive the build artifacts.

What's great about this is that I can clearly see which committed changes relate to which build, helping me identify when, and more importantly where, a new bug was introduced.

I currently have it set to keep a backlog of 30 builds, but you can potentially keep an unlimited number of builds (limited by your hard drive space, of course).

So how do you configure this? Assuming you have Jenkins (and the required source control plugin) installed already, create a new job as a free-style software project. In the job configuration page, set the max # of builds to keep (leave blank if you don't want to limit this). In the source code management section, set it up according to whichever source control software you use (I'm using git). This section, and how you set it up, is going to vary greatly depending on which source control software you use.

Under build triggers, select Poll SCM and enter a schedule in cron syntax based on how frequently you want to poll the source repository for changes.

Under the build section, add an Execute Windows batch command build step. You then script up which targets you want to build (you can use the script in my previous post as a template).

Under post-build actions, add Archive the artifacts. In the files to archive text box, set up the fileset masks you want. For a standalone build it would look like "game.exe,game_Data/**".

That's it! I do know that there is a Unity plugin for Jenkins that'll help run the BuildPipeline without having to write a batch script, but I never had success getting it running, so I just went this route.

Automating Unity Builds

I wanted a way to automate building Unity projects from the command line, and also to commit each build to a git repo so I can keep track of the builds I make (in case something breaks, I can go back to previous builds and see where it might have broken). This is my poor man's CI process.

Here's the script in all its glory.

As you can see, it's nothing special. Simply plug in the path to your project and where you want the exe to be output. Adding other build targets is trivial as well. Best part of this: it doesn't require the Pro version of Unity at all!
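If the embedded script isn't visible to you, the general shape is roughly the following. This is a Python sketch, not the batch script from this post: it uses Unity's documented command line arguments (`-batchmode`, `-quit`, `-projectPath`, `-buildWindowsPlayer`), but the function names, the separate builds repo layout and every path are hypothetical placeholders you'd replace with your own.

```python
import subprocess
from typing import List


def unity_build_command(unity_exe: str, project_path: str, output_exe: str) -> List[str]:
    """Build the argument list for a headless Windows standalone build."""
    return [
        unity_exe,
        "-batchmode",            # run without opening the editor UI
        "-quit",                 # exit once the build finishes
        "-projectPath", project_path,
        "-buildWindowsPlayer", output_exe,
    ]


def build_and_commit(unity_exe: str, project_path: str, output_exe: str,
                     builds_repo: str, message: str) -> None:
    """Run the build, then commit the output to a git repo of builds.

    Assumes output_exe lives inside builds_repo, which is already a git repo.
    """
    subprocess.run(unity_build_command(unity_exe, project_path, output_exe), check=True)
    subprocess.run(["git", "-C", builds_repo, "add", "-A"], check=True)
    subprocess.run(["git", "-C", builds_repo, "commit", "-m", message], check=True)
```

Other targets would swap `-buildWindowsPlayer` for the matching Unity build argument.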

This solution is temporary. I'm going to wrap this in Jenkins so that it'll detect git commits then build and archive the game's .exe. More on that soon!

Monday, July 7, 2014

How to program independent games by Jonathan Blow - A TL;DR

This is a talk about productivity, about getting things done, more than anything. Programmers that get their computer science degree are often taught how to optimize code, but this generally comes at a great expense to productivity. Indie game devs have to wear several hats (if not all of the hats), so time is too precious to be wasted.

There are several examples Blow goes through to illustrate this, some of which I think are very weak arguments given modern APIs (I'm referring to his hash table vs. arrays argument), but the majority of them are spot on. One that stood out in particular is the urge to make everything generic, when it may not be necessary. More often than not, a method you're writing will be a one-off, only used to perform some type of action on one type of object, so time is absolutely wasted trying to make that method work on an entire hierarchy of objects.

The biggest takeaway is simply this: the simplest solution to implement is almost always the correct one. Get it done. Move on. Fix it/optimize it only when you absolutely need to.

Monday, April 21, 2014

Creating a flexible audio system in Unity

Playing only one audio clip at a time can be a problem in scenarios where you have an AudioSource attached to a prefab, your Player for example, and you have multiple audio clips you'd like to be played in quick succession. Your player jumps, so you want to play a jumping sound effect, but while that jumping sound effect is playing, they get hit by something, so you swap out the audio clip and play a player hit sound effect. If you're trying to use a single AudioSource, that'll cut off the currently playing jumping sound effect. It'll sound bad, jarring and confusing to the player. The most obvious solution is to simply attach a new audio source for every audio clip you'd like to play. That may get nightmarish if you end up having a lot of possible audio clips to play.

My solution has been to create a central controller that listens for game events, obtains a pooled AudioSource game object and places it in the scene at a specified location (in case the audio clip is a 3D sound), loads it with a specified AudioClip, plays it, and then returns the instance to the object pool for later use. This allows you to play multiple audio clips at a single location, at the same time, without them cutting each other off. You also get the benefit of keeping your game prefabs clean and tidy.

I'm always reluctant to share my code because I use StrangeIoC, which not everyone is using (though you probably should!) and the code structure may seem alien, but a keen developer should be able to adapt the solution to their needs. Let's go through a working example.

I've attempted to comment this Gist well enough so that people who aren't familiar with StrangeIoC can still follow along. The basic execution flow is:

- Player is hit, dispatch a request to play the "player is hit" sound effect

- This is a fatality event, dispatch a request to also play the "player fatality" sound effect

- PlaySoundFxCommand receives both events

- For each separate event, attempt to obtain an audio source prefab from the object pool. If one is not available, it will be instantiated

- If the _soundFxs Dictionary doesn't already have a reference to the requested AudioClip, load it via Resources.Load and store reference for future calls

- Set up the AudioSource (assign AudioClip to play, position, etc.)

- Play the AudioSource

- Start a Coroutine to iterate every frame while the AudioClip is still playing

- Once the AudioClip is done, deactivate the AudioSource and return it to the object pool
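
Stripped of Unity and StrangeIoC, the flow above can be sketched roughly like this. It's plain Python and not the code from the Gist: `Clip`, `FakeAudioSource` and `SoundFxPlayer` are hypothetical stand-ins, the `load_clip` callback plays the role of Resources.Load, and a per-frame `update(dt)` call stands in for the coroutine.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple

Position = Tuple[float, float, float]


@dataclass
class Clip:
    """Hypothetical stand-in for an AudioClip."""
    name: str
    length: float  # seconds


class FakeAudioSource:
    """Hypothetical stand-in for a pooled AudioSource game object."""

    def __init__(self) -> None:
        self.clip = None
        self.position: Position = (0.0, 0.0, 0.0)
        self.active = False
        self.remaining = 0.0

    def play(self, clip: Clip, position: Position) -> None:
        self.clip, self.position = clip, position
        self.active = True
        self.remaining = clip.length

    def is_playing(self) -> bool:
        return self.remaining > 0


class SoundFxPlayer:
    def __init__(self, load_clip: Callable[[str], Clip]) -> None:
        self._load_clip = load_clip          # stands in for Resources.Load
        self._clips: Dict[str, Clip] = {}    # cache of already loaded clips
        self._pool: List[FakeAudioSource] = []
        self._playing: List[FakeAudioSource] = []

    def play(self, clip_name: str, position: Position) -> FakeAudioSource:
        # Reuse a pooled source if one is free, otherwise create a new one.
        source = self._pool.pop() if self._pool else FakeAudioSource()
        if clip_name not in self._clips:
            self._clips[clip_name] = self._load_clip(clip_name)
        source.play(self._clips[clip_name], position)
        self._playing.append(source)
        return source

    def update(self, dt: float) -> None:
        """Call once per frame; returns finished sources to the pool."""
        still_playing = []
        for source in self._playing:
            source.remaining -= dt
            if source.is_playing():
                still_playing.append(source)
            else:
                source.active = False
                self._pool.append(source)
        self._playing = still_playing
```

Because every request gets its own source, the "player is hit" and "player fatality" effects can overlap, and once both finish their sources are available for whatever plays next.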